mistory

The Emerging Role of AI in Primary Eye Care

Artificial intelligence (AI) refers to a machine-based system that attempts to replicate human cognitive function.1 In health care, the role of AI has gradually developed from attempting to replicate complex human decision making into making significant predictions for patient outcomes and management. In this article, Dr Jack Phu and Henrietta Wang consider areas where AI can take a role in eye health care – and where it shouldn’t.

WRITERS Dr Jack Phu and Henrietta Wang

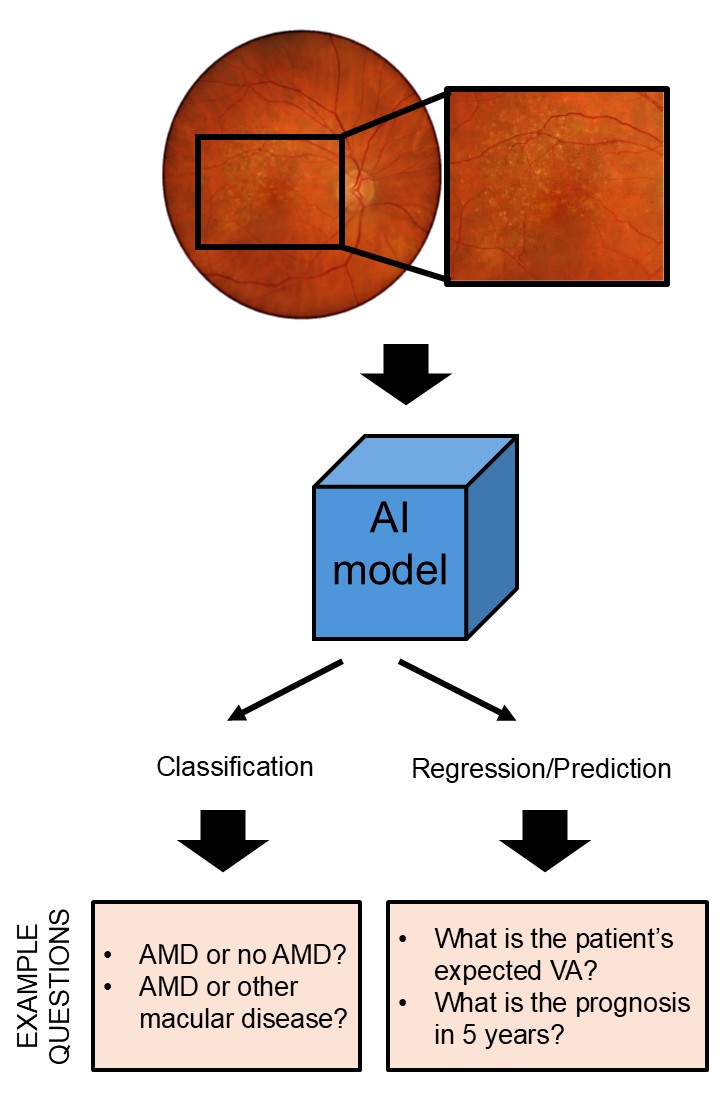

Figure 1. A basic framework for understanding the potential role of AI models in addressing clinical problems (classification versus regression/prediction).

Within the broad term of AI, there are escalating levels of complexity. These include machine learning (ML), which refers to the ability of a machine to learn without explicit programming, and deep learning (DL), which refers to its ability to learn using multilayered networks (loosely resembling a brain).2

Currently in health care, we can consider two broad problems that AI attempts to address.3 First, we have the problem of classification. This refers to questions such as whether a patient has a condition or not, or which of several possible conditions best explains a presentation (differential diagnosis). Second, we have the problem of regression and prediction. Here, AI attempts to uncover factors that might be contributing to a health outcome, or to use data to predict the likelihood of an outcome.

We can use age-related macular degeneration (AMD) as an example of AI application. Using fundus photography, we can ask a classification question: “Does this patient have AMD?” For the regression/prediction problem, we can ask: “What is the patient’s expected visual acuity?” (Figure 1). This represents a relatively simple clinical problem. However, with its ability to efficiently integrate large volumes of clinical data, the role of AI may be expanded to tackle more complex presentations that may be cognitively burdensome for the human clinician.

Despite the proffered advantages of AI, there are also barriers to implementation. Clinicians need to be aware of the strengths and limitations of AI in clinical practice. Herein, we outline some topics related to the application of AI in primary eye care.

AI IN DISEASE DETECTION AND DIFFERENTIAL DIAGNOSIS

Glaucoma represents an interesting clinical problem as it uniquely suffers from both underdiagnosis and overdiagnosis.4 This diagnostic dilemma persists despite the wealth of clinical data available for patients. In brief, data collected as part of a comprehensive, routine glaucoma examination include a clinical history, intraocular pressure (IOP), corneal thickness, gonioscopy/anterior chamber angle parameters, perimetry data, fundus examination and photography, and retinal imaging.5 Each of these techniques, in turn, yields a large amount of qualitative and quantitative data that clinicians use for decision making.

Here, the role of AI could be one of classification: distinguishing glaucoma from normal eyes, and separating glaucoma from other diseases. This could be approached using individual techniques (such as perimetry or fundus photography in isolation) or a multimodal approach.

There has been a considerable range in outcomes, such as sensitivity, specificity, and accuracy, for glaucoma diagnosis when using individual techniques. In general, these outcomes range from 85–95%.4,6-8 Of note, these studies often compared the diagnostic ability of AI with that of ophthalmologists grading the same images, and the ophthalmologists often performed worse than the AI system.9 However, there are several caveats here. First, the apparently superior performance of AI relative to a group of ophthalmologists may reflect better internal consistency. Second, diagnostic outcomes such as sensitivity and specificity are wholly dependent on the underlying ground truth data set. Such data sets are often carefully curated for internal consistency, with stringent requirements on agreement. Third, using techniques such as visual field results and a single fundus photograph in isolation is not a true representation of routine glaucoma care. Specifically, visual field testing is subjective, and fundus photography is vulnerable to subjective interpretation. The poorer performance of ophthalmologists under these conditions is, therefore, not surprising.
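To make the second caveat concrete, the following minimal Python sketch computes sensitivity, specificity and accuracy from paired binary labels (1 = glaucoma, 0 = normal). The function name `diagnostic_metrics` and all labels are hypothetical toy values, not data from the cited studies. Scoring the same model outputs against two different reference standards shows how the reported figures depend on the ground truth, not on the model alone.

```python
# Minimal sketch: diagnostic metrics from binary labels (1 = glaucoma, 0 = normal).
# HYPOTHETICAL: all labels and predictions below are invented toy values.

def diagnostic_metrics(truth, predicted):
    """Return (sensitivity, specificity, accuracy) for paired binary labels."""
    if len(truth) != len(predicted):
        raise ValueError("truth and predicted must be the same length")
    tp = sum(1 for t, p in zip(truth, predicted) if t == 1 and p == 1)
    tn = sum(1 for t, p in zip(truth, predicted) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(truth, predicted) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(truth, predicted) if t == 1 and p == 0)
    sensitivity = tp / (tp + fn)  # share of diseased eyes correctly flagged
    specificity = tn / (tn + fp)  # share of healthy eyes correctly cleared
    accuracy = (tp + tn) / len(truth)
    return sensitivity, specificity, accuracy

# The same model outputs, scored against two hypothetical reference standards
model_output = [1, 1, 1, 0, 0, 0, 0, 0, 1, 0]
curated_truth = [1, 1, 1, 0, 0, 0, 0, 0, 1, 0]  # strict consensus labels
clinic_truth = [1, 1, 0, 1, 0, 0, 0, 0, 1, 1]   # labels from a different grader

for name, truth in [("curated", curated_truth), ("second grader", clinic_truth)]:
    sens, spec, acc = diagnostic_metrics(truth, model_output)
    print(f"{name}: sensitivity={sens:.0%}, specificity={spec:.0%}, accuracy={acc:.0%}")
```

Against the curated labels the model appears perfect; against the second grader's labels, sensitivity falls to 60%, specificity to 80% and accuracy to 70%, even though the model's outputs are unchanged.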

When using retinal imaging, such as optical coherence tomography (OCT), diagnostic accuracy levels reported in the literature have been similar to the above (generally above 90%).10 Additionally, studies have highlighted the importance of image integrity, whereby lower quality input images result in dramatically lower diagnostic accuracy (77%).11

Generally, multimodal approaches, such as combining fundus photographs and visual field results, lead to improvements in diagnostic accuracy.6,12 This is consistent with clinical approaches, and the natural history of glaucoma, which may show discordance between structure and function at times.13

Although the studies referenced above tend to report high diagnostic accuracy using AI approaches, there is also an effect of disease severity, whereby classifiers have higher accuracy with more severe stages of glaucoma compared to early disease.14 This is also consistent with diagnostic challenges in clinical practice. Because moderate and severe glaucoma already show overt signs of disease, the added value of AI is questionable when it does not clearly distinguish between early disease and glaucoma suspects, which is where diagnostic support is most needed.


In that vein, several studies have investigated the role of AI in differential diagnosis. Again, reported results tend to demonstrate a high ability of AI to differentiate between glaucoma and non-glaucomatous optic neuropathy. Another advantage of AI in this context is improved internal consistency compared to human clinicians. However, these studies share the limitations discussed above: their data sets are often seeded with ‘obvious’ cases that do not present diagnostic challenges. Available data sets are also limited overall, and non-glaucomatous optic neuropathies are often grouped together rather than considered as distinct conditions.

Despite the high levels of diagnostic accuracy reported internally within studies, AI studies in general suffer from issues of external validity. External validity refers to the performance of the model on data sets outside of the experimental condition, and is a method for assessing real-world applicability. Unfortunately, studies tend to report high internal validity but much poorer external validity. Several reasons for this have been described, including differences in the demographics of the data sets, differences in the rigour with which the data were obtained, and the reliability of external data sets. A drop in diagnostic accuracy of 10–20 percentage points on external data significantly limits the deployment of AI models in clinical settings.
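The gap between internal and external validity can be illustrated with a deliberately simple Python sketch. Assumptions: the "model" is a single hypothetical cut-off (`CUTOFF`) on one measurement, standing in for a trained classifier, and both data sets are invented. The cut-off suits the internal data, but when healthy eyes in the external population measure lower (for example, because of different demographics or a different device), some of them are misclassified.

```python
# Toy illustration of internal versus external validity.
# HYPOTHETICAL: the "model" is a single cut-off on one measurement, standing in
# for a trained classifier; all values are invented for illustration.

CUTOFF = 80.0  # hypothetical decision threshold chosen on the internal data set

def classify(value):
    """Flag as glaucoma (1) when the measurement falls below the cut-off."""
    return 1 if value < CUTOFF else 0

def accuracy(cases):
    """cases: list of (measurement, true_label) pairs."""
    correct = sum(1 for value, label in cases if classify(value) == label)
    return correct / len(cases)

# Internal test set: drawn from the same curated source as the development data
internal = [(95, 0), (92, 0), (98, 0), (90, 0), (88, 0),
            (65, 1), (70, 1), (60, 1), (72, 1), (68, 1)]

# External set: hypothetical population whose healthy eyes measure lower
external = [(85, 0), (78, 0), (83, 0), (76, 0), (90, 0),
            (65, 1), (75, 1), (60, 1), (72, 1), (68, 1)]

internal_acc = accuracy(internal)
external_acc = accuracy(external)
drop_points = round((internal_acc - external_acc) * 100)  # percentage points

print(f"Internal accuracy: {internal_acc:.0%}")
print(f"External accuracy: {external_acc:.0%}")
print(f"Drop: {drop_points} percentage points")
```

Here internal accuracy of 100% falls to 80% on the external set, a drop of 20 percentage points, without any change to the model itself.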

AI IN RISK ASSESSMENT, DISEASE PROGRESSION, AND PROGNOSIS

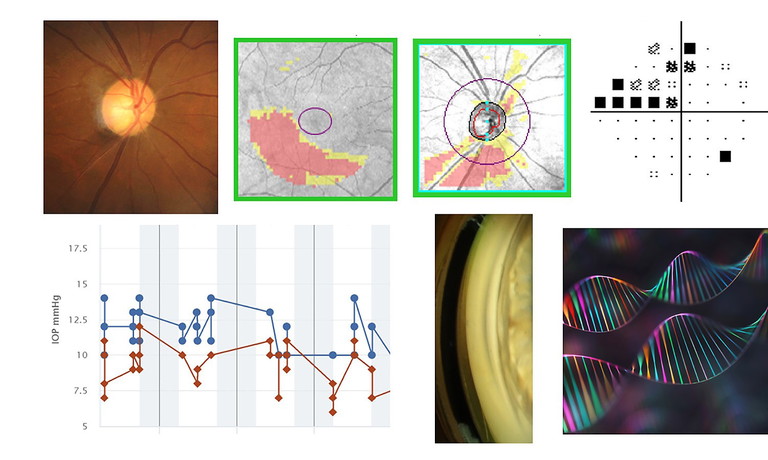

As mentioned, another broad role of AI in health care is prediction/regression. Again, using glaucoma as an example, the question is whether AI can use input data to determine risk of developing glaucoma, its progression, and therefore prognosis (Figure 2).

Although AI can integrate multimodal clinical data, to date, the results for predicting progression and risk have been more modest compared to diagnostic questions. In general terms, short-term progression and risk (e.g. over months to a few years) may be assessable using AI, but beyond this period, the precision and accuracy of predictions worsen dramatically. Studies have used a prediction approach to stratify or personalise care by predicting glaucoma severity under different levels of IOP control. Again, estimates tend to be more robust in the short term, but are likely to have limited clinical utility in the long term.

Figure 2. Examples of clinical data that could be integrated into AI models in glaucoma, including colour fundus photography, OCT, standard automated perimetry data, IOP, anterior chamber angle parameters, and genetic information.

The role of AI in predicting outcomes suffers from similar limitations to classification problems. The limited availability of longitudinal data sets remains an obstacle to developing robust prediction models. It is also difficult to evaluate the clinical significance of the changes predicted by AI.

AI IN LANGUAGE PROCESSING AND REPORTING

An emerging use of AI relates to large language models (LLMs), which are systems trained to understand, generate, and process human language. Many commercial models exist and have been leveraged in various fields to provide responses to questions essentially in real time.

One potential role of LLMs is to answer patient questions related to disease. This has been explored in a variety of ophthalmic conditions. Our research group has recently examined the role of LLMs in glaucoma and AMD.15,16 We used a panel of experts to evaluate the LLM responses to frequently asked questions related to these diseases and judged them on cohesion, accuracy, comprehensiveness, and safety. Although most responses were adequate, we raised several major concerns, including out-of-date information, irrelevant information, and notable omissions in the responses.

We and others have flagged key limitations in the use of LLMs in a patient-facing capacity.15-17 First, depending on the LLM, some of its source material may be out of date. At best, this may make the information irrelevant, but it may also be dangerous, depending on the context. Second, responses lack comprehensiveness. Key phenotypes and details of disease subtypes, such as secondary open-angle glaucoma, are often neglected. The issue is that patient-users are unlikely to be experts in the field and will likely fail to recognise important omissions in information. Third, responses are relatively impersonal. Short of providing actual clinical data inputs into the LLM, the responses are not tailored to individual patients, which limits their utility.

PRACTICAL ISSUES WITH AI IN HEALTH CARE

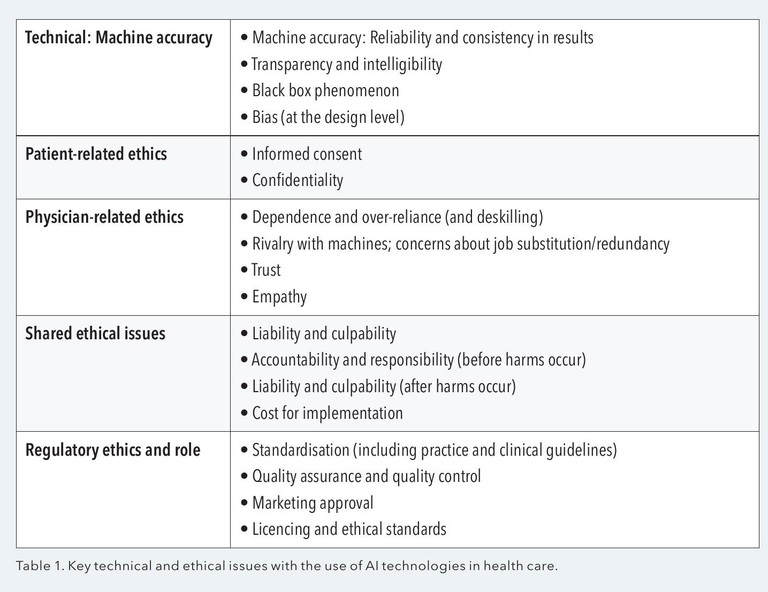

The sections above have alluded to some limitations of deploying AI for clinical use. Broadly, we can frame the limitations of AI from several ethical perspectives: technical, patient-related, physician/clinician-related, shared ethics, and regulatory bodies.18,19 These are summarised in Table 1.

The recognition of these limitations has led to advancements in the field. Explainable AI is an emerging approach to addressing the ‘black box’ effect of AI. For example, AI models in glaucoma may flag features of interest to explain their outputs, such as the inferotemporal vulnerability zone, the optic cup, and the trajectory of the retinal nerve fibre layer. Regulatory bodies, such as the United States Food and Drug Administration and Australia’s Therapeutic Goods Administration, are releasing recommendations and position statements on the use of AI in clinical practice.

However, several critical issues remain unresolved. These include liability/culpability, accountability, and cost of deployment. For example, if a clinician relies on an AI system for diagnosis and management, where does the responsibility lie? There are also issues with patient confidentiality and whether informed consent procedures adequately cover the potential unauthorised dissemination and use of sensitive patient information when uploaded into AI systems. We have recently raised this issue as well.20

Therefore, until questions about whether the benefits of AI outweigh the risks of its use have been answered, there is a limited role for AI in primary eye care. We caution against the injudicious use of AI in routine clinical practice. Patients whose data are to be processed by AI technology must be informed.

Dr Jack Phu MD PhD BOptom (Hons) Diplomate (Glaucoma) is a clinician scientist, lecturer at University of New South Wales Sydney, adjunct professor at University of Houston College of Optometry and junior medical officer at Bankstown-Lidcombe Hospital. His basic and clinical sciences research program focusses on improving the understanding of glaucoma and retinal disease and its diagnosis and management in clinical practice.

Henrietta Wang BOptom (Hons) BSc MPH FAAO is a clinician-researcher and senior staff optometrist at Centre for Eye Health. Her clinical, teaching and research duties are focussed on ocular diseases, imaging and collaborative care models. She has served on numerous national and international professional committees to advance the profession.

References available at mivision.com.au.